The Crash of ’87 was a big deal, though not in the way most people remember. It was a stock market event, obviously, and those are the terms under which it has been understood. That’s not really its legacy, however, as the major shifts that began with Black Monday have had little and most often nothing to do with stocks or share prices.

Alan Greenspan was new to the top job at the FOMC on October 19. He and his fellow policymakers were very much concerned a stock market crash might trigger something along the lines of 1929. They would think that because for them, operating under an expectations regime, it didn’t matter that money and shares had been divorced and separated for decades by then. The Fed in its moneyless modern incarnation worried about how people might feel about the market chaos, and then act on those feelings alone.

Wall Street itself was shaken, though not because of some pop psychology side experiment. The bull of the eighties was much more than Gordon Gekko. The BSDs were all bond traders, not stock jockeys. It was the rise of the quants.

The stock crash was appreciated, then, as a very prominent, in-your-face example of “tail risk.” These extreme outcomes are applicable in all markets. Even the bond desks were sweating heavily in October 1987.

Some who weren’t sweating were those like John Meriwether’s “young professors” at Salomon Brothers (Solly), whose experience is retold in Michael Lewis’ Liar’s Poker. These mathematicians actually foretold the future. And Solly wasn’t alone or unique.

In the aftermath of Black Monday, JP Morgan’s chairman Dennis Weatherstone began to demand a 4:15 update every trading day. He wanted to know just how much the whole “bank” might stand to lose in the next day’s trading based on everything that had happened up to and including the just-completed session. It was the forerunner of value-at-risk, or VaR.

Again, it wasn’t really about share prices or exposures to what was offered at the NYSE. JP Morgan wasn’t itself a stock trader so much as an investment bank exposed to price swings in other markets, those ostensibly called “fixed” income. FICC would often be anything but, and smaller price movements in these places could end your career – or your bank – in a hurry.

Wall Street took its demand to the Ivy League business schools, where the Economics curriculum had already been evolving toward statistics. Mathematicians would become prized recruits, even (especially) if they had graduated without ever discovering the difference between a bond and a stock. What happened in ’87 was the last push in the direction of complete monetary evolution.

It would achieve its full capacity around 1995 when JP Morgan began offering its mathematical services to the Street, and all over the rest of the growing eurodollar world. RiskMetrics was in huge demand because VaR and other statistical construction tools had become integral pieces of modern bank balance sheet construction.

One contemporary report published in FRBNY’s April 1996 Economic Policy Review magazine described in typical understated fashion the utter revolution that was taking place.

Recognition of these models by the financial and regulatory communities is evidence of their growing use. For example, in its recent risk-based capital proposal (1996a), the Basle Committee on Banking Supervision endorsed the use of such models, contingent on important qualitative and quantitative standards. In addition, the Bank for International Settlements Fisher report (1994) urged financial intermediaries to disclose measures of value-at-risk publicly. The Derivatives Policy Group, affiliated with six large U.S. securities firms, has also advocated the use of value-at-risk models as an important way to measure market risk. The introduction of the RiskMetrics database compiled by J.P. Morgan for use with third-party value-at-risk software also highlights the growing use of these models by financial as well as nonfinancial firms.

It really begins with what seems a simple question. If you have $100 million as a major bank, figuring out what to do with it is almost inconsequential. What if you have $100 billion? Or, more precisely, how do you go from allocating internal resources in increments of tens perhaps hundreds of millions to tens perhaps hundreds of billions? A mistake in the former construction paradigm is a bad day; a mistake in the latter is your last day.

There is the primary objective to consider, as well. What I mean is that you also have to figure out how to get from tens of millions to tens of billions and then hundreds of billions. Everyone knows that the shortest distance is a straight line, but in terms of “bank” balance sheets in the nineties, what is a straight line? There is a steep learning curve and, more than that, this stuff isn’t really scalable, at least not directly; you can’t just do what you always did times a hundred or a thousand.

VaR and related mathematical governance offered what seemed like a magical answer. It would, in theory, tell you not just how much your bank might be at risk but more than that the most efficient way to put together often very different concepts (as is required for a multi-line financial firm seeking to get involved in all aspects of modern money dealing). Even by the middle nineties, as the FRBNY article spells out, this was already standard procedure. RiskMetrics truly opened up all its potential, good and, ultimately, bad.

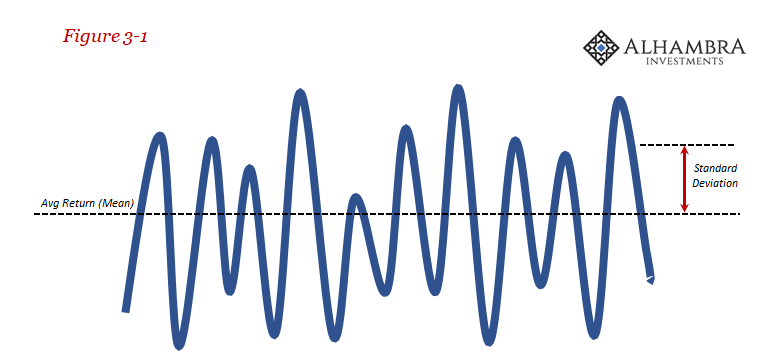

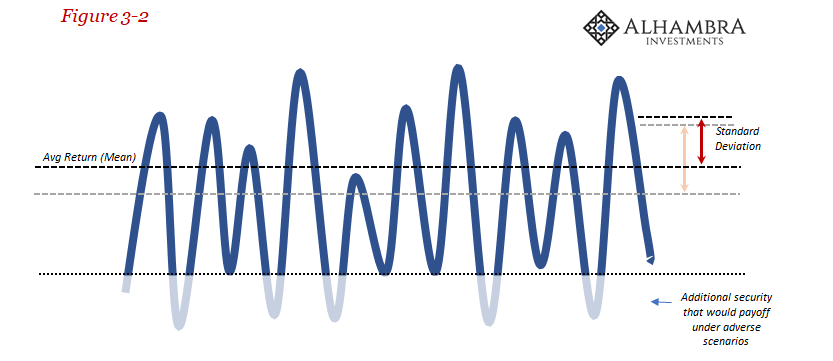

For all the complex regressions and multi-variate non-linear equations embedded within these models, the concepts guiding them are really quite simple. A security (or portfolio of securities) can be thought of as a series of seemingly randomly distributed returns.

We can track the historical returns of any security to come up with their average and standard deviation (all RiskMetrics did was bootstrap this kind of data for securities and markets that didn’t often or ever trade regularly; this is not to trivialize the accomplishment, which was an impressive feat, especially for early nineties technology and levels of sophistication). From just those two numbers, we can compute VaR.

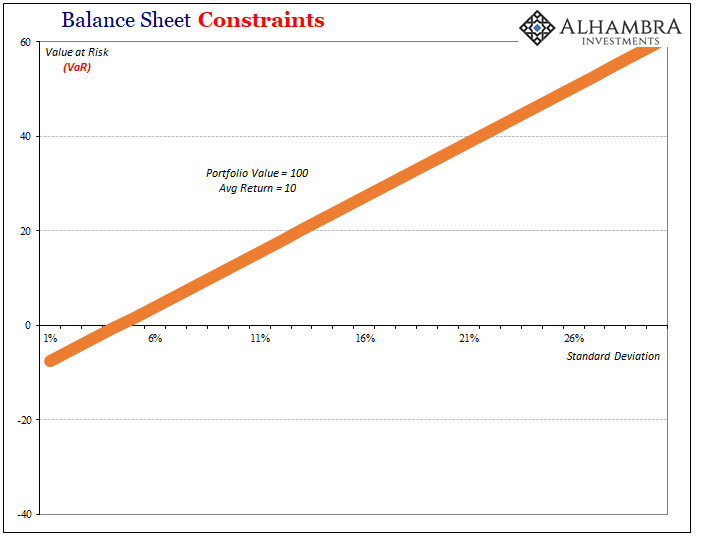

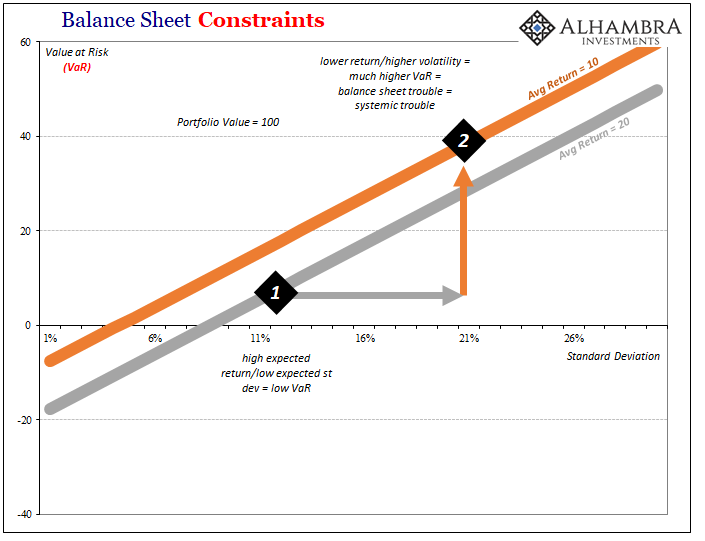

To be clear, these are heavily stylized illustrations that do not correspond to actual data. My goal is merely to illuminate basic concepts rather than to more accurately depict how all this works in the real world (which is where complexity truly gets going; the math that goes into distributions alone can at times become dizzying in its intricacies, shocking in its brilliant elegance). As shown above, a simple portfolio of securities valued at 100 with an average return of 10 produces a VaR number that depends on whatever standard deviation is assumed.

The calculated results follow intuitively: the greater the presumed volatility (standard deviation), the higher the value at risk. We can compute VaR for any timescale, from a daily number to a longer-dated one that tries to account for more “tail risk” scenarios. Banks will use combinations of these to construct their VaR “budgets” and to set desk constraints.
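The relationship between volatility and VaR can be sketched in a few lines. This is only a minimal illustration, not the method behind the figures above: it assumes returns are normally distributed and uses the textbook parametric formula (VaR equals the normal quantile times the standard deviation, less the average return). The function names `parametric_var` and `scale_to_horizon` are my own, and the rescaling to longer horizons further assumes independent, identically distributed returns.

```python
import math
from statistics import NormalDist


def parametric_var(mean_return, std_dev, confidence=0.99):
    """Loss at the chosen confidence level, assuming normally distributed returns.

    VaR = z * std_dev - mean_return, where z is the standard normal quantile.
    A negative result means the outcome at that level is still a gain.
    """
    z = NormalDist().inv_cdf(confidence)
    return z * std_dev - mean_return


def scale_to_horizon(mean_return, std_dev, periods):
    """Rescale per-period figures to a longer horizon (assumes i.i.d. returns)."""
    return mean_return * periods, std_dev * math.sqrt(periods)


# Hypothetical portfolio valued at 100 with an average return of 10.
for sigma in (5, 10, 15, 20):
    print(f"std dev {sigma:>2}: 99% VaR = {parametric_var(10, sigma):6.2f}")
```

Holding the average return fixed, each step up in standard deviation pushes VaR higher, which is all the stylized charts are meant to convey.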

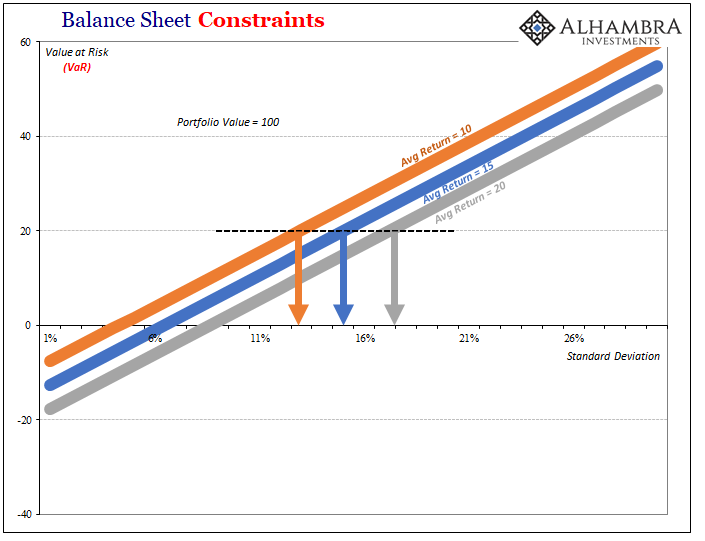

A portfolio of securities that holds a higher average return with a lower computed (or imputed) standard deviation is going to reflect a smaller VaR, meaning that any bank following the math would be able to design its balance sheet more efficiently according to any risk constraints. If your risk budget for VaR is max 20, as shown above, for a security with an average return of 10 the highest allowed standard deviation is little more than 12%; for one with an average return of 20, you could allow for volatility of almost 18%.
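The same arithmetic can be run in reverse to ask how much volatility a given VaR budget will tolerate. The sketch below again assumes a normal distribution at a 99% confidence level, which is my assumption rather than anything stated for the figure; `max_std_dev` is a hypothetical helper name.

```python
from statistics import NormalDist


def max_std_dev(var_budget, mean_return, confidence=0.99):
    """Largest standard deviation that keeps parametric VaR within the budget.

    Rearranges VaR = z * std_dev - mean_return to solve for std_dev.
    """
    z = NormalDist().inv_cdf(confidence)
    return (var_budget + mean_return) / z


for mean_return in (10, 20):
    limit = max_std_dev(var_budget=20, mean_return=mean_return)
    print(f"average return {mean_return}: max std dev = {limit:.1f}")
```

Under these assumptions the limits come out near 12.9 and 17.2, in the neighborhood of the numbers quoted above; the small differences would come down to whatever distribution and confidence level sit behind the original chart.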

The real magic, so to speak, is not just in evaluating what’s already out there; rather, it’s in the ability to be proactive with this information. It’s all well and good that you might be able to pick out portfolios of securities that are already low VaR, but the big enchilada is how you might take something high VaR and customize it into low VaR. This is the internal, parallel process to “regulatory capital relief” that in terms of capital ratios allowed banks to be similarly efficient in balance sheet activities.

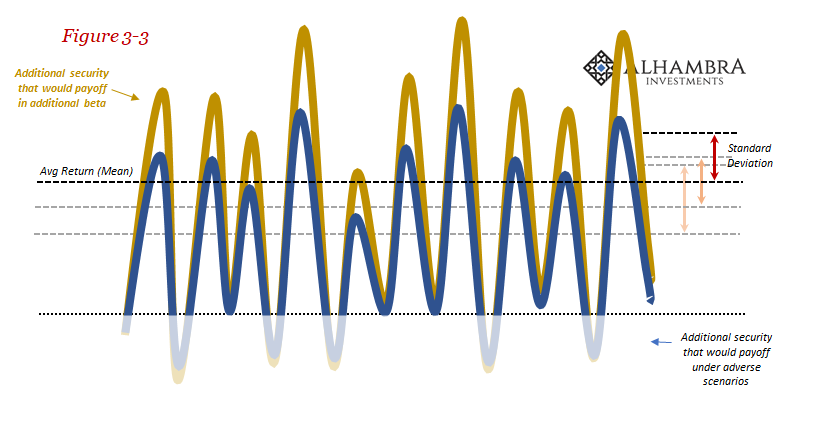

What would happen if we took our original portfolio of securities from Figure 3-1 and assumed there existed some kind of security or insurance that paid out predictably under any adverse scenario? In terms of balance sheet mathematics, it would remove the lower portions of the probability distribution, raising the expected average return while at the same time lowering its standard deviation (expected volatility). This combination would result in a much reduced VaR.
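A quick simulation shows the effect of cutting off the lower part of a distribution. Everything here is hypothetical: the returns are random draws from a normal distribution, the protection is a stylized floor that pays out fully below a chosen level, and its cost is ignored for simplicity. VaR is read directly off the sorted sample rather than from a formula.

```python
import random
from statistics import mean, pstdev


def simulate_returns(mean_return, std_dev, n, seed=0):
    """Hypothetical normally distributed returns (seeded for repeatability)."""
    rng = random.Random(seed)
    return [rng.gauss(mean_return, std_dev) for _ in range(n)]


def apply_floor(returns, floor):
    """Stylized protection: any return below the floor is made up to the floor."""
    return [max(r, floor) for r in returns]


def historical_var(returns, confidence=0.99):
    """Loss at the given confidence level, read off the sorted sample."""
    ordered = sorted(returns)
    return -ordered[int((1 - confidence) * len(ordered))]


unhedged = simulate_returns(mean_return=10, std_dev=15, n=20_000)
hedged = apply_floor(unhedged, floor=-5.0)

for label, sample in (("unhedged", unhedged), ("hedged", hedged)):
    print(f"{label:>8}: mean={mean(sample):6.2f} "
          f"std={pstdev(sample):6.2f} VaR99={historical_var(sample):6.2f}")
```

With the worst outcomes replaced by the floor, the sample mean rises, the standard deviation falls, and the 99% VaR collapses to the size of the floor itself. A real hedge would also subtract its premium from every outcome.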

Obviously, these kinds of securities and insurance exist in the form of derivatives of all kinds. If you ever thought to ask yourself why there are so many hundreds of trillions in gross notional derivatives out there, this is primarily the reason (as to why there are so many hundreds of trillions fewer in 2018 than 2008, that’s below). It’s about controlling risk, which is really about controlling the mathematics of balance sheet construction.

There are any number of other forms, too. You can add those that increase the upside potential, not just those that address downside risks. The average return takes into account the costs associated with adding on hedges and derivative controls, where the object of doing so is to customize a portfolio such that it fits within designed tolerances starting with VaR. You can then take this as a starting point to more comprehensively design a whole balance sheet (portfolio of portfolios) using more complex and deeper mathematics (vega).

By using these techniques, you balance the expected mean against the expected standard deviation where the key word is “expected” – so long as all these continue to operate according to the past.

In that April 1996 FRBNY article, author Darryll Hendricks foretells the fatal flaw. Tail risks aren’t nearly so straightforward as they are made to be under any standard distribution, even those featuring heavy kurtosis. Most VaR calculations are made using a 95% or 99% confidence interval (to find the “minimum” return for a given time period) but that doesn’t tell us anything about what to expect outside the distribution.

The “minimum” return might be a small loss under the 99% confidence interval, but if markets turn out like the Crash of ’87 then inside that remaining 1% could be conditions that simply wipe you out. At that point VaR or anything else like it is useless.

Hendricks:

The outcomes that are not covered are typically 30 to 40 percent larger than the risk measures and are also larger than predicted by the normal distribution. In some cases, daily losses over the twelve-year sample period are several times larger than the corresponding value-at-risk measures. These examples make it clear that value-at-risk measures—even at the 99th percentile—do not “bound” possible losses

In other words, it all looks good…until it doesn’t.
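The point that VaR does not bound losses can be seen in a toy sample. This is not Hendricks’ data or method: the returns below are hypothetical draws from a mix of calm and turbulent days (the 5% share of turbulent days and their volatility are made-up parameters), chosen only to produce fatter tails than a single normal distribution.

```python
import random
from statistics import mean


def fat_tailed_returns(n, seed=1987):
    """Hypothetical daily returns: mostly calm, with occasional turbulent days."""
    rng = random.Random(seed)
    return [rng.gauss(0, 4.0) if rng.random() < 0.05 else rng.gauss(0, 1.0)
            for _ in range(n)]


def historical_var(returns, confidence=0.99):
    """Loss at the given confidence level, read off the sorted sample."""
    ordered = sorted(returns)
    return -ordered[int((1 - confidence) * len(ordered))]


returns = fat_tailed_returns(20_000)
var_99 = historical_var(returns)
breaches = [-r for r in returns if -r > var_99]  # losses larger than the VaR

print(f"99% VaR:                  {var_99:.2f}")
print(f"average loss in a breach: {mean(breaches):.2f}")
print(f"worst loss in the sample: {max(breaches):.2f}")
```

The VaR figure marks where the worst 1% of days begins, nothing more. How far the average and the worst breach sit beyond it depends entirely on the shape of the tail, which is exactly the part the risk measure does not describe.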

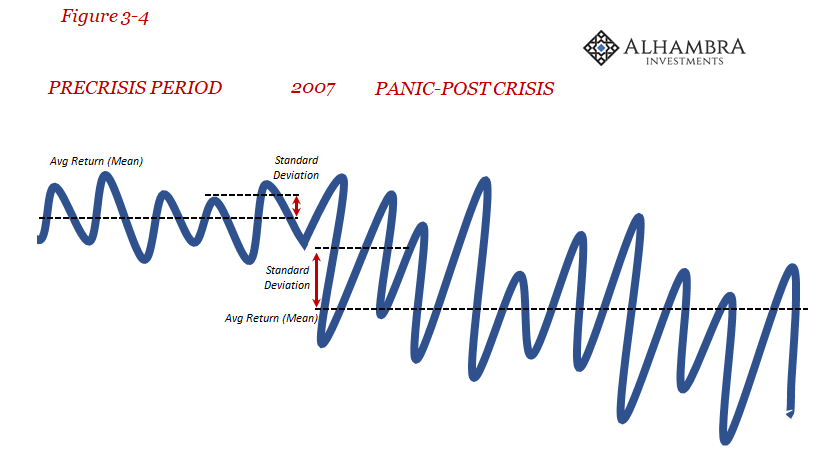

What happens to balance sheet construction when suddenly volatility picks up in an unexpected (even ahistoric) fashion and takes on a heavily downward skew to boot? As we know from the charts above, VaR can only explode higher. If you are caught when out of nowhere the expected average return drops while the standard deviation jumps, the changes in VaR aren’t going to be small. They trigger violations and demand immediate attention, even action.

Under normal conditions, you might simply bite the bullet and go out into the market and buy more protection (Figure 3-2). Doing so would also reduce your expected rate of return by adding the cost of that protection, but it might be worth the expense if it reduces your anticipated standard deviation sufficiently according to VaR.
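The breach and the decision to buy protection can be put into rough numbers. As before, this is a sketch under a normal-distribution assumption at 99%, and every figure in it (the budget of 20, the shocked return and volatility, a hedge costing 3 that removes 9 points of standard deviation) is hypothetical.

```python
from statistics import NormalDist

Z_99 = NormalDist().inv_cdf(0.99)  # standard normal quantile, about 2.33
VAR_BUDGET = 20                    # hypothetical desk limit


def parametric_var(mean_return, std_dev):
    """99% VaR under a normal assumption: z * std_dev - mean_return."""
    return Z_99 * std_dev - mean_return


def protection_is_worth_it(std_dev_reduction, cost):
    """A hedge lowers VaR only if the volatility removed outweighs its cost.

    New VaR = z * (std - reduction) - (mean - cost), so VaR falls
    exactly when z * reduction > cost.
    """
    return Z_99 * std_dev_reduction > cost


before = parametric_var(mean_return=10, std_dev=12)          # within budget
after = parametric_var(mean_return=5, std_dev=18)            # return drops, volatility jumps
hedged = parametric_var(mean_return=5 - 3, std_dev=18 - 9)   # pay 3 to remove 9 of std dev

for label, value in (("before", before), ("after shock", after), ("hedged", hedged)):
    status = "OK" if value <= VAR_BUDGET else "BREACH"
    print(f"{label:>11}: VaR = {value:6.2f}  {status}")
```

In this example the hedge brings the portfolio back inside its budget, but only because protection is assumed to be on offer at that price.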

But what if the cost of additional protection is prohibitive? Or, more troubling, not readily available, hard to find, perhaps even no longer offered by anyone? At that point, you are stuck only with some pretty bad scenarios (including OTTI impairments, write-downs, and firesales).

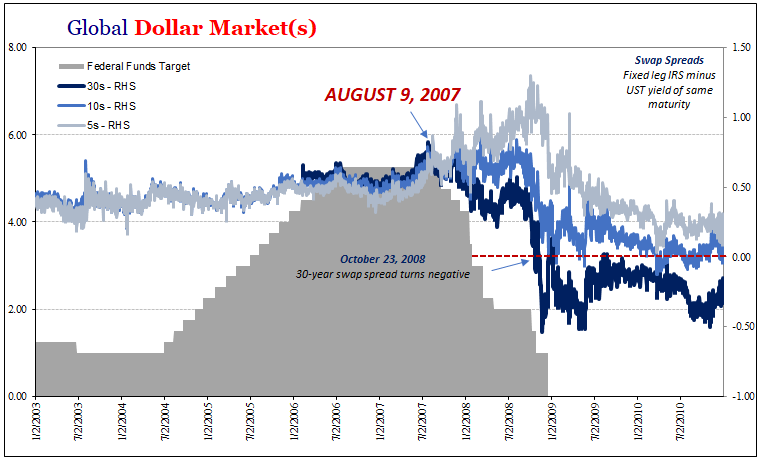

It was one of the most unappreciated aspects of what happened starting in 2007. If you were having trouble with your own portfolios as they related to VaR and other risk constraints, so was everyone else. As you demanded additional risk inputs in the form of whatever derivatives, so did the rest of the system; at the same time, you were offering less to the market, just as all other participants were pulling back (in general, this is what happened in the interest rate swap market, as shown above). Demand up and supply down = no reasonable way to control portfolio math = REAL BAD.

Any central bank is supposed to act as lender of last resort, but lender of what? The answer to that question is money, but what counts as money in this sort of system? Bank reserves? Certainly not.

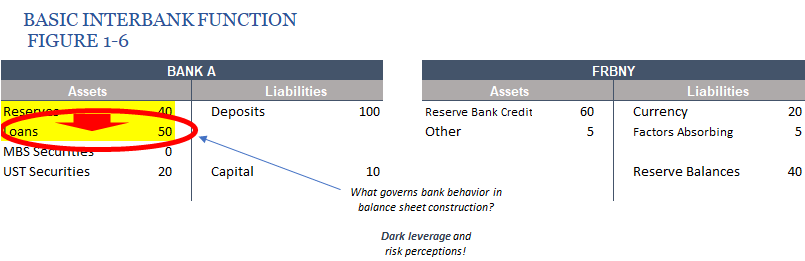

What I mean when I refer to “balance sheet capacity” as money is this sort of stuff that governs how the banking system behaves internally. It’s in every way just like traditional money, pieces of traded liabilities that allow banks to accomplish entirely financial and often monetary processes. It is a form of money multiplier that isn’t recognizable without some kind of useful deconstruction (such as this one).

If you are forced to sell a security at a firesale price because you can no longer predictably anticipate its average returns and volatility, it’s the exact same result as if you had experienced a run on your vault. A run on dark leverage is the same thing just removed to a different, strange realm.

The Panic in 2008 was a run, alright, just not one that is or was immediately recognizable. It was a run on balance sheet capacity; the ability to construct and maintain a bank balance sheet in the manner desired. It explains why the Federal Reserve and all the rest not only failed time and again, they could only fail.

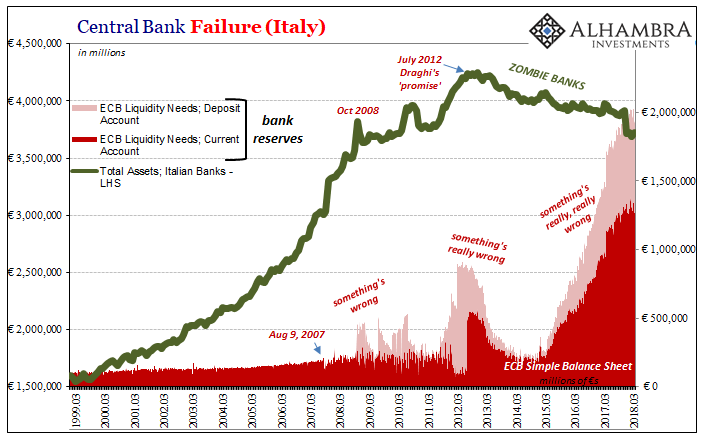

Unfortunately for the world, what happened on August 9, 2007, wasn’t a fit of transitory doubt; it was a paradigm shift in all the points that truly mattered. Balance sheet capacity has become permanently impaired by the revelations of its own internal contradictions (including those spelled out by Mr. Hendricks more than a decade before the crisis). Risks are greater than ever believed and statistically modeled (not just tail risks), and it’s now harder to make the math work like it did before since you can’t do what you used to, and others won’t offer what used to be standard; which makes perceived risk even greater, making it even more difficult to make the numbers work; and so on.

Continued crises after 2008 and these bouts of often extreme volatility (predicated on liquidity risks) have only further hardened this systemic shift.

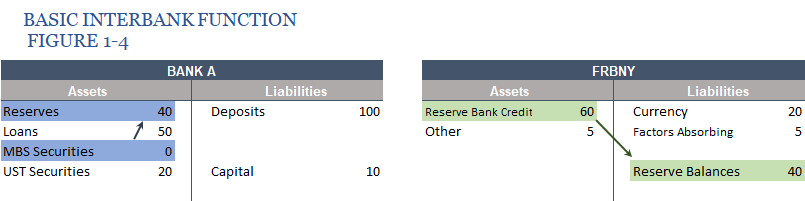

What do large scale asset purchases (LSAP) like quantitative easing (QE) accomplish in this situation? It’s not easing, not for banks where they are meant to have a direct effect. Reserves are nothing more, as pictured in Figure 1-4 (above), than a distracting asset swap. The difficulties in bank balance sheet construction and capacity remain unaltered, even totally unappreciated. When volatility rises, what good are bank reserves?

VaR demands what they can never, ever provide. And “they” is global, from the Federal Reserve to the ECB or SNB or BoE. Eurodollar balance sheet capacity knows no national or currency boundaries. The real money in this fully evolved offshore system is this dark leverage stuff. RiskMetrics was a development for every central bank on the planet to consider; none yet have despite closing in on a quarter century since JPM opened up the whole monetary world to it.

It used to be plentiful, seemingly unlimited. Now it’s not. For all its stunning and beautiful complexity, this lost economic decade is really just that simple. Risk/return; or, rather, expected risk/expected return.
