Since the Federal Reserve is not in the money business, its recent hawkish shift toward an increasingly anti-inflationary stance is a twisted and convoluted case of subjective interpretation. Inflation is money, and if the Fed were a central bank the issue of consumer prices wouldn’t necessarily be simple. It would, however, be much simpler: is there or isn’t there too much money flowing through the economy?

News to the vast majority of the public, no one at any central bank anywhere has a clue as to how to answer the question that way. Instead, Economists have been forced to the rudimentary distillation of economic aggregates, chief among these being the unemployment rate.

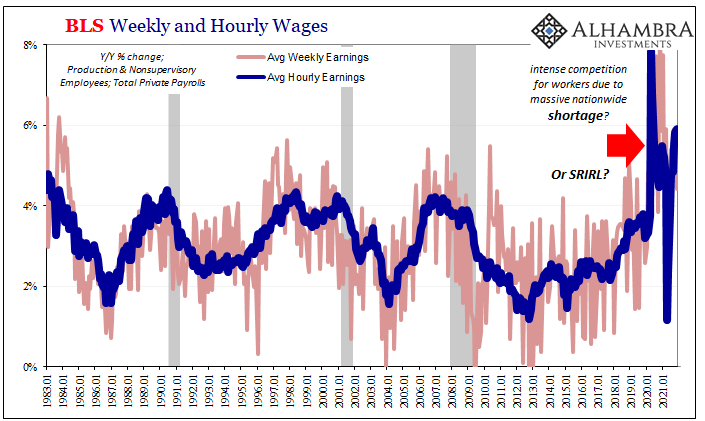

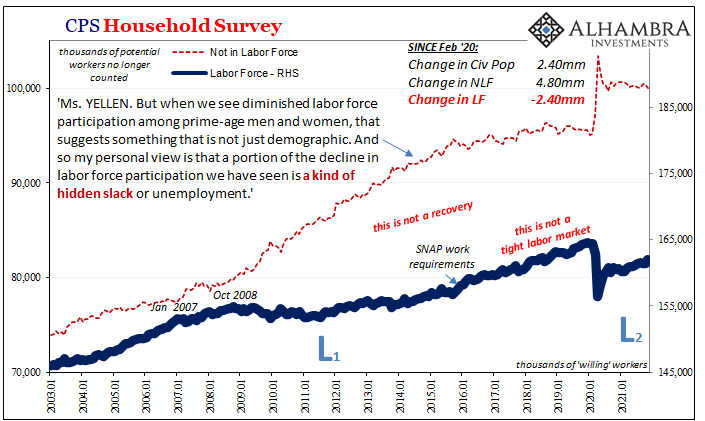

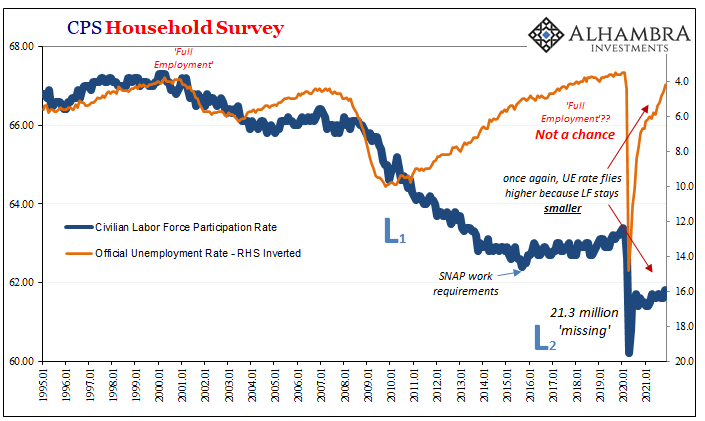

Not really the rate itself, rather what is supposed to happen once that number falls low “enough.” From 2014 onward, we’ve been waiting for the allegedly (despite no evidence) tight labor market to produce the next order effect. Since we just did this not really all that long ago, you might still remember it:

To review once more: unemployment rate says tight labor market which suggests heavy competition for workers, this bidding war drives up wages, companies pass the increased costs to customers, voila, accelerating CPI, or PCE Deflator, which confirms the economy is actually booming and monetary policy a complete, total success.

Here we are all over again, with the Fed combining its interpretation of the (equally evidence-free) psychology behind recent CPIs with the once-again rapidly declining unemployment rate (just like 2018-19) to arrive in the area of inflationary danger.

As for the latter, it’s all about wages. Should the labor market truly tighten, competition for workers heats up and raises the market-clearing rate desperate companies would have to pay for them.

Unfortunately for Jay Powell’s FOMC, as it debates the genesis of any inflationary pressures, real or imagined (I think the double taper and triple rate hike show the matter has been settled in the Committee’s collective mind), the situation is even more complicated this time (2022) than it was last time (2018-19).

Without any monetary proficiency, and with the unemployment rate having been so misleading ever since 2014 when this same discussion really got started, it should be easy enough to consult the labor statistics and use the wage data to either confirm or again deny the unemployment rate.

In other words, maybe the unemployment rate was wrong for years before, but this time could it be useful? Wages should settle the matter.

Except the wage data may not be confirming the low unemployment rate at all; it may instead be rising in what has historically been deflationary fashion. Yes, no mistake, deflationary.

What most people know of the Great Depression is most often limited to FDR, the New Deal, and 1929’s stock market (once again, Economics has left the public in a fog of confusion). One of the more profound and confounding results produced by the deflationary outburst behind the 1930s was how wage rates, especially during the worst of it, went up by a lot.

This was the worst economic contraction in modern history, with the United States in particular seeing millions upon millions thrown out of work in a relentless destruction of income potential. The ranks of the employed crashed by an unimaginable degree, various measures of unemployment citing an ultimate peak of perhaps 25% to 30% or even more of all available workers.

And yet, while the number of payrolls was being decimated, the estimated real wage skyrocketed. Yes, you read that correctly; the more millions left for the charity of soup-lines before starvation, the higher the real wage rate climbed. This head-scratching result even has a fancy name: SRIRL, or short-run increasing returns to labor.

There have been several explanations put forward over the decades since the Great Depression to account for these specific outcomes and among the most accepted are labor hoarding as well as survivors’ bias.

Taking the second one first, studies in the eighties (under a revival of Depression-era interest using disaggregated, micro-level data) found that, among other things, much of the output contraction took place as firms simply went out of business; they didn’t survive the prolonged downturn.

According to several of these, including one on the all-important motor vehicle industry (‘Wage Rigidity’ in the Depression: Concept or Phrase?, mimeo, Department of Economics, Wesleyan University, September 1989), firms that exited, as you might expect, tended to be of lower productivity and lower wages, replaced eventually by higher-paying and more productive ones (technology shock). It was figured that about a third of the drop in output from 1929 to 1933 was due to permanent plant closures.

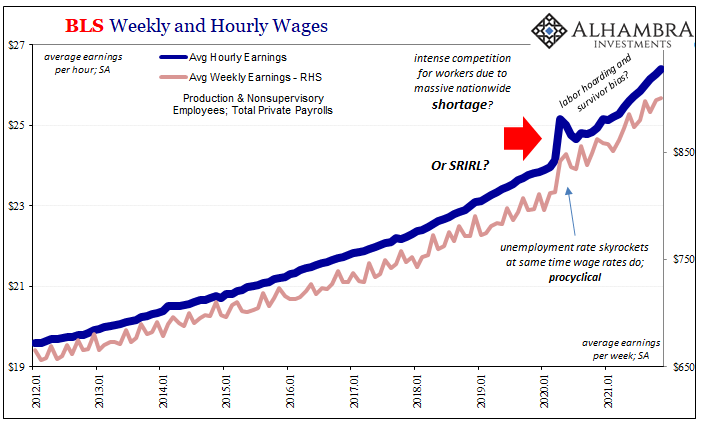

What might happen in the 21st century if the government arbitrarily closed down parts of the system, especially services? Should quite a lot of them stay closed, especially those offering lower-pay opportunities, then the published wage data might not accurately reflect the actual deflationary situation. The wage data gets skewed by the survivors.

Thus, higher published or estimated aggregate wage levels, then and perhaps now, weren’t and wouldn’t be consistent with the inflationary wage conditions of a period like the seventies.
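To make the survivor effect concrete, here is a minimal sketch of the arithmetic. Every number in it is hypothetical (the headcounts, the two wage tiers, the share of low-wage jobs that disappear) and the helper `average_wage` is invented purely for illustration; none of it comes from the published wage data. The point is only that an aggregate average can rise when nobody at all gets a raise.

```python
def average_wage(groups):
    """Headcount-weighted average hourly wage over (workers, hourly_wage) pairs."""
    total_workers = sum(workers for workers, _ in groups)
    total_pay = sum(workers * wage for workers, wage in groups)
    return total_pay / total_workers


# Hypothetical economy before the shutdown: (workers, hourly wage).
before = [(600, 15.0), (400, 30.0)]

# Hypothetical economy after: half the low-wage jobs are gone for good.
# No surviving worker's wage has changed.
after = [(300, 15.0), (400, 30.0)]

avg_before = average_wage(before)
avg_after = average_wage(after)

print(f"average before: {avg_before:.2f}")
print(f"average after:  {avg_after:.2f}")
```

In this made-up case the aggregate goes from 21.00 to about 23.57, a gain of roughly 12%, produced entirely by who is left in the sample rather than by any bidding for workers.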

Moreover, firms also exhibited a consistent tendency across industries to hold what amounted to a labor reserve. As Ben Bernanke (yes, that guy) wrote in one of his more famous papers (Employment, Hours, and Earnings in the Depression: An Analysis of Eight Manufacturing Industries; American Economic Review, March 1986), firms do express a clear preference when confronted with choosing “between receiving one hour of work from eight different workers and receiving eight hours from one worker.”

In other words, as output/revenue declines businesses have to scale down their labor use; they must cut hours to match actual output just to survive. Beyond a certain point – a different one for each individual business – hours can’t be cut back any further, or at least not one-to-one with falling output and revenue.

Companies have to pay workers some minimum level to retain them regardless of whether they have the work available at that time; to keep up a necessary minimum weekly pay, companies are working workers less but paying them, essentially, for hours they might not actually work just to keep them around.

As a consequence, the aggregated hourly rate goes up because it cannot account for what is decidedly non-inflationary hidden slack.
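The same kind of back-of-the-envelope sketch works for labor hoarding. The figures below are assumptions chosen for round numbers (a $20 base rate, a $600 weekly retention floor, hours cut from 40 to 24), and `measured_hourly_rate` is a hypothetical helper, not anything taken from Bernanke's paper or from the official statistics.

```python
def measured_hourly_rate(base_rate, hours_worked, weekly_floor):
    """Hourly rate as the statistics would see it: weekly pay divided by hours.

    Weekly pay is the larger of pay for hours actually worked and the
    minimum weekly amount (hypothetical) needed to keep the worker around.
    """
    weekly_pay = max(base_rate * hours_worked, weekly_floor)
    return weekly_pay / hours_worked


# Normal times: 40 hours at $20 is $800, well above the floor.
normal = measured_hourly_rate(base_rate=20.0, hours_worked=40, weekly_floor=600.0)

# Slump: only 24 hours of work exist, but the firm still pays the $600 floor.
slump = measured_hourly_rate(base_rate=20.0, hours_worked=24, weekly_floor=600.0)

print(f"normal: {normal:.2f}/hour, slump: {slump:.2f}/hour")
```

Under these assumptions the measured rate rises from 20.00 to 25.00 an hour even though the worker's weekly income has dropped from $800 to $600. The higher hourly figure is hidden slack, not wage pressure.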

There is another kind of survival bias, as individual businesses were also found to have substantially favored higher-skilled, and therefore higher-paid, employees when selecting for this labor reserve; better to keep around those who had already demonstrated their value to employers, even if it means paying a relatively higher rate as far more low-paying workers get laid off.

Which also sounds very familiar.

Evidence for historical labor hoarding has been found all over; for example, in another Bernanke paper, this time co-authored with Martin Parkinson (Procyclical Labor Productivity and Competing Theories of the Business Cycle: Some Evidence from Interwar U.S. Manufacturing Industries; Journal of Political Economy, June 1991):

> Since its discovery by Hultgren (1960), the procyclical behavior of average labor productivity, also known as short run increasing returns to labor (SRIRL), has achieved the status of a basic stylized fact of macroeconomics. The ubiquitous nature of procyclical productivity has been confirmed by studies at levels of aggregation ranging from the firm to the national economy, and for a variety of countries and sample periods.

There’s quite a lot more going on in detail, but for our discussion a cursory review will suffice to introduce these substantial doubts. Why bring up SRIRL?

To start with, the biggest jump in aggregate wage rates occurred at the same time the unemployment rate skyrocketed in March 2020; procyclical from the very start, just like the thirties.

Also like the Great Depression, published wages then, as I recalled last week, “stayed high for the rest of the decade despite its lack of recovery and massive, depression-within-a-depression setback in 1937 and 1938.”

History appears to be repeating. I’d even written about this possibility back during the earliest days of “recovery” in August 2020 when there was already plausible secondary evidence available then:

> Before even getting to July [2020], this divergence between hours and headline payrolls had already suggested that companies may have been holding on to more workers than the decline in output would’ve demanded. In other words, the level of output and actual work performed had declined more than the reduction in headcounts, by a lot more, leaving us to suspect businesses were holding back a sort of reserve of their own workers (who were still on the books but idle nonetheless) having them at-the-ready for when reopening got started.

This plus any further survivor selection bias would quite neatly account for published wage rates that are unlike anything since, well, maybe the thirties. Productivity, too.

The FOMC seems to be looking at this same data and concluding the increases are “real”, rejecting the very strong possibility of being misled when the data along with history strongly indicate SRIRL. This might then mean, if true, double taper and triple rate hikes (for 2022, allegedly) are being driven by more bad economics (small “e”) despite the fact SRIRL is a creature of and by the same Economics practiced at the Fed.

Misreading potentially deflationary labor hoarding as inflationary wages would be another huge mistake along the same lines of bad subjective interpretation officials have made time and time and time again, most recently 2019’s LABOR SHORTAGE!!!! The Fed’s bias on the economy is always, despite all that average inflation targeting nonsense, highly inflationary.

The economy’s, however, despite the wage data (actually, consistent with the wage data as well as the participation figures) may still not be anything close.
